Imposing a temporal logic structure on LLM generation

[Dec 2022]

Live demo ↗ (you will need a GPT-4 API Key)

My Contribution

- Helped implement neccessary functions that communicates between Javascript and GPT4 on the demo website

- Helped design the predicates in temporal logics for verifying the TSL-LLM structure

- Wrote Python to track the robustness of LLM content under the assumptions of TSL

Project Summary

1. Motivation

Large Language Models are amazing. But the content they generated are bad at remaining consistent, have little sense of arithmetics, and worse, hard to maintain and track. Replicating previous success often means copying a template prompt, which isn’t ideal for more complicated systems.

Temporal logic specifications can be used to synthesize reactive systems by writing high-level descriptions of desired behavior, without the need to manually program a complete system.

In this work, we investigate how Temporal Stream Logic (TSL), a temporal logic specification language, can be used to instruct LLM for generating content that is temporally consistent. This system is also easy to modify and maintain due to its modular design. We chose a choose-your-adventure-game as a testbed to evaluate the usability of our system and outline future directions for advancing the collaboration of LLM and reactive synthesis.

2. How it works

2.1 Intuitively

We use TSL to specify what we what to happen in the adventure story, and the temporal relationships between these events. For instance, we want the player to only get to the Cave (some more advanced area) after they already visited the Market and the Town (less advanced area). We don’t care how many times player stumble on the Market or the Town, as long as that eventually when they get to the Cave, they already been to the pre-requisite places. As a narrator / designer, the sequence or priority of events matter.

2. 2 TSL - separating Control and Data

- function and predicate terms are calls to an LLM

-

TSL is structuring these calls over time

-

This gives us formal guarantees about the high level structure of the story, while leaving the details to the LLM

3. Specification

- Assumptions

initially assume{

! inCave passage;

! inMarket passage;

! inTown passage;

}

always assume {

//we wont hallucinate a cave, but we might accidentally end up in town or at a market

[passage <- toCave summary] <-> F inCave passage;

[passage <- toMarket summary] -> F inMarket passage;

[passage <- toTown summary] -> F inTown passage;

}

- Guarantees

guarantee {

//you cant go to the cave until you have been to town and the market once

(! (inCave passage)) W (inMarket passage);

(! (inCave passage)) W (inTown passage);

}

always guarantee {

//eventually, always visit all the places, and keep summary up to date

[summary <- updateSummary passage];

F inCave passage;

F inMarket passage;

F inTown passage;

}

4. Function and predicate terms

-

function terms

toCave, toMarket, toTown -

prompts that try to send the reader to the given location

- e.g. “Compose a passage where the reader visits a market to buy supplies.”

- predicate terms:

5. Communication with GPT4

let response = callGPT4(“Read this passage in an adventure story. Is the main character in a market or not? Respond '0' if it is false, or '1' if it is true.", passage)

return response.includes("1");

6. Challenge

6.1 Cost of synthesis and API calls

This is really expensive:

[summary <- updateSummary passage];

We can have nice strategies for updating context and put bounds on cost:

(townVisits % 3 = 0) -> [summary <- updateSummary passage];

inTown passage <-> [townVisits <- townVisits + 1];

inMarket passage <-> [summary <- updateSummary passage];

[summary <- updateSummary passage] <-> [summaryCalls <- summaryCalls +1]

inCave passage <-> summaryCalls <= 3

inCave passage -> [summaryCalls <- 0]

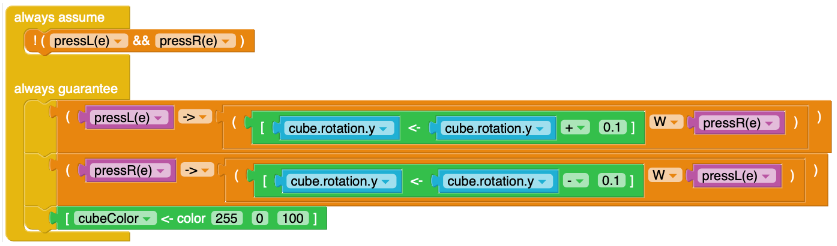

6.2 IDE support

How do we get users to write specifications effortlessly?

Previous efforts: Block-based visual programming link to paper↗

7. Future directions

-

Flexibility with constraints on function and predicate terms

-

More transparency in synthesis result

-

More use of generative power of synthesis

-

More data driven development/research decisions

-

...

Raven Rothkopf, Angel Leyi Cui, Hannah Tongxin Zeng, Arya Sinha, Mark Santolucito

PL Lab

Programing Languages Lab ↗

Barnard Department of Computer Science

PL Lab

Programing Languages Lab ↗

Barnard Department of Computer Science